Original source: Adobe Marketo Engage User Groups

This article is an editorial summary and interpretation of that content. The ideas belong to the original authors; the selection and writing are by Marketo Ops Radar.

This video from Adobe Marketo Engage User Groups covered a lot of ground. 8 segments stood out as worth your time. Everything below links directly to the timestamp in the original video.

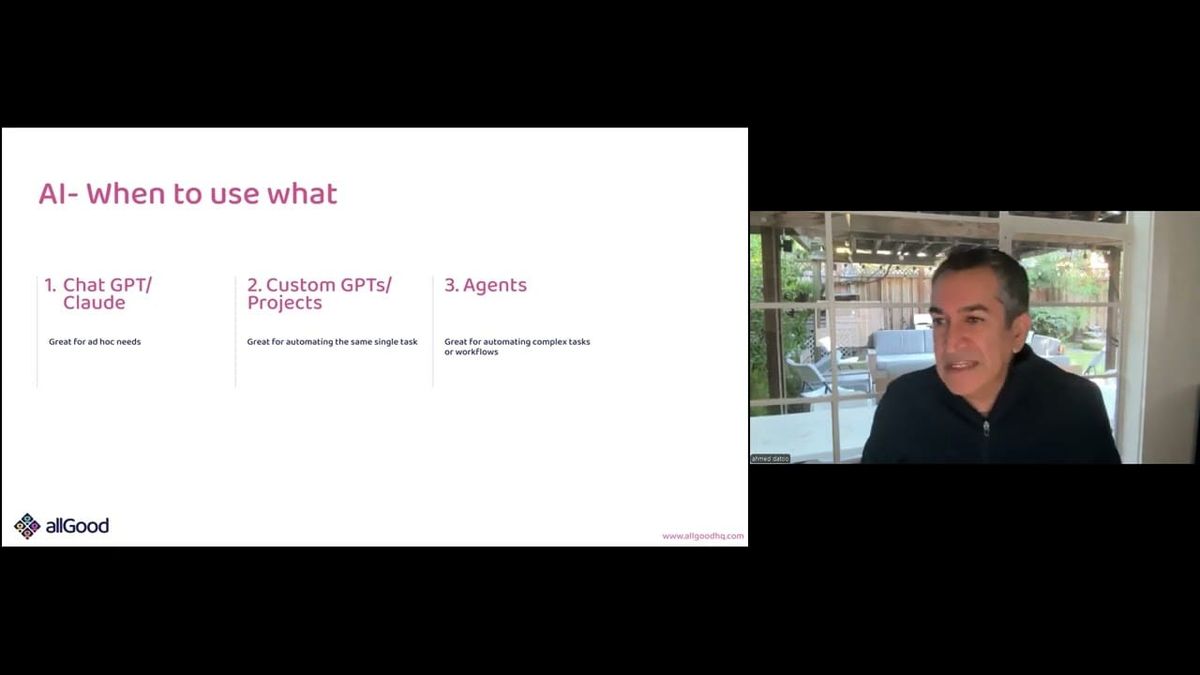

If your team is still debating whether to use AI for marketing operations tasks, the more productive question is which tool tier fits the task. This framework gives you a repeatable way to answer it.

A Practical Framework for Choosing Between Ad Hoc AI, Custom GPTs, and Agents in Marketing Ops

A presenter introduced a decision framework for selecting the right AI tool tier based on task complexity and frequency: conversational AI for one-off questions, custom GPTs or Projects for repeatable single-step tasks, and agent pipelines for complex multi-step workflows that chain outputs across systems. The framing is directly applicable to how marketing operations teams evaluate AI adoption without defaulting to the most complex option available.

The session used this taxonomy to set up a five-part data hygiene workflow — country code standardization, phone normalization, job title translation, job leveling, and automated list upload — demonstrating that each cleaning step maps to a different tool tier based on its complexity and interdependency. The contact washing machine framing makes a persuasive case that data quality is the prerequisite for any downstream personalization or scoring strategy.

For practitioners weighing where to begin with AI in their stack, this tiered model offers a low-friction entry point: start with repetitive single-task automation via custom GPTs before committing to agent infrastructure. The explicit acknowledgment that monolithic prompts break down under complexity — and that modularity improves reliability — is a useful design principle for anyone who has tried and failed to consolidate too much logic into one prompt.

"Agents are really great for automating super complex tasks. If you have a task that may involve a really long prompt, it's actually better to break it up into smaller prompts and use agents — it's just more reliable."

Using Custom GPTs with Reference Data to Standardize Country Codes on Inbound Lead Lists

A practitioner demonstrated loading ISO country code reference data directly into a custom GPT so that any uploaded lead list — from trade shows, content syndication, or partner uploads — automatically receives a standardized two-letter country code column. The key design detail is embedding the reference lookup inside the GPT configuration itself, making the tool self-contained and reusable across list uploads without re-prompting.

One practical constraint surfaced in the demo: entries with non-Latin characters (such as country names written in Chinese script) were flagged as unrecognized rather than silently mapped or dropped. This failure mode is actually useful — it surfaces records that need additional treatment, in this case translation, before the country code step can complete cleanly. The workflow was intentionally designed so that this unresolved record flows into the next cleaning step.

For teams regularly processing inbound lists from international field events or third-party sources, this pattern reduces manual cleanup time and produces a consistent field that downstream Marketo smart lists and routing rules can reliably target. The replicability is high: the only non-obvious step is sourcing and formatting the ISO reference data for the GPT knowledge base.

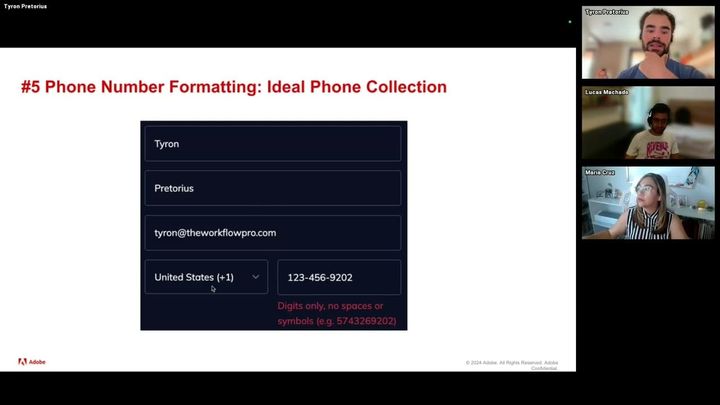

Normalizing International Phone Numbers to E.164 via Custom GPT — Including Invalid Number Detection

A practitioner demonstrated a custom GPT configured to normalize international phone numbers to the E.164 standard, producing a new column on the input spreadsheet with reformatted values. The demo surfaced a practically important behavior: rather than silently reformatting or dropping an invalid number, the GPT flagged the record explicitly, creating a clear handoff point for enrichment or manual review.

This flagging behavior matters operationally. Invalid numbers that are silently passed through degrade dialer performance and complicate sync quality with CRM systems. Having the cleaning step surface bad records rather than obscure them keeps the downstream data contract cleaner and gives the operator an actionable list to route to an enrichment provider.

For teams managing international lead volume with mixed phone formatting — country codes present in some records but not others, local versus international formats — E.164 normalization is a prerequisite for reliable dialer integration and CRM sync. The custom GPT approach makes this a one-step repeatable operation rather than a manual or formula-based transformation.

"One of the phone numbers was invalid, so rather than formatting it, the custom GPT actually spits out and says hey, this number is not a real number — at which point you can invoke your enrichment provider to go through the process of enriching those leads."

Translating Non-English Job Titles Before Lead Scoring Prevents Silent Lead Loss in International Programs

A presenter demonstrated using an LLM-based project to translate non-English job titles — including French, Japanese, and Chinese — into English as a prerequisite step before lead scoring or job level classification. The core problem framed is that keyword-based scoring and routing rules written in English silently skip records where the job title field contains non-Latin characters or foreign-language text, meaning those leads are never scored, never routed, and never followed up on appropriately.

The demo showed the LLM writing code to process the spreadsheet inline, identifying source languages and translating titles while flagging the detected language — a level of transparency that makes the output auditable. The pattern is applicable to any team running international programs where inbound lead forms may capture job titles in the submitter's native language rather than English.

The implication for scoring and routing design is significant: if your rules are entirely English-language keyword dependent, your coverage rate for international leads is likely lower than you think. Translation as a preprocessing step, applied before leads enter scoring or routing logic, is a structural fix rather than a workaround.

"These would be leads that we're left behind — they're not being scored, they're not being routed to appropriate people, and these could be some monstrous leads with the right persona titles that are just getting missed."

LLM-Based Job Level Classification Replaces Brittle Smart-List Keyword Rules — and Handles Titles Your Rules Never Will

A practitioner made a direct case for replacing keyword-based Marketo smart-list job level classification with LLM-based categorization, demonstrating Claude classifying translated job titles into five configurable job level categories. The fundamental argument is maintainability: keyword smart lists require constant manual updates as new title variations appear, and invariably accumulate a large 'other' bucket that represents classification failures rather than genuinely uncategorizable records.

The LLM-based approach works by providing the model with a definition of each job level category and letting it reason about where a given title fits — no keyword enumeration required. This handles creative or ambiguous titles (such as 'Head of Marketing') that don't map cleanly to any keyword set, and it scales across languages when combined with the translation step. The five-category structure shown is intentionally minimal and customizable; teams can adapt categories to match their existing persona frameworks.

For teams that have already built keyword-based job level logic, the practical entry point suggested is not a full replacement but augmentation: run the LLM only against the existing 'other' catch-all bucket to recover leads that the rules already missed. This reduces the scope of change while delivering immediate value on the records most likely to be misclassified.

"The way I used to do this in Marketo is I'd create a smart list which ended up becoming really complex — a series of if the job title contains this or this or this, and make sure to exclude this, this, this. We were constantly maintaining that list because people are really creative with their job titles."

Two Techniques for Managing LLM Accuracy and PII Risk in Marketo Data Cleaning Workflows

A Q&A exchange surfaced two high-value operational techniques for teams facing blockers on LLM-based data cleaning. On PII, the approach shared was to strip personally identifiable fields — name, email — from the spreadsheet before upload, retaining only the Marketo ID and the fields requiring cleaning. Because Marketo's API and list upload both support matching on Marketo ID, cleaned data can be reloaded without the email address ever leaving the organization's systems. This sidesteps the most common security team objection without requiring enterprise AI licensing or legal review.

On accuracy, the session introduced few-shot prompting as the primary mechanism for improving LLM job classification reliability. Rather than accepting initial classification errors, practitioners should run a sample batch, identify misclassified examples, and add those specific examples — labeled with the correct category — directly into the prompt. The model's accuracy improves substantially with even a small number of concrete examples. A complementary strategy is to apply LLM classification only to the 'other' catch-all bucket from existing keyword-based rules, where misclassification rates of 10–30% are commonly observed — a high-value, lower-risk scope for initial deployment.

Both techniques address the two most common objections to LLM use in marketing operations contexts: security concerns and accuracy concerns. The PII workaround is immediately deployable without IT approval in most organizations; the few-shot accuracy improvement is iterative and requires no additional tooling.

"I just end up uploading the Marketo ID — I don't have the first name, last name, nor email. If I've got all the other fields which are non-personally identifiable, I can run it through the LLM, clean it, and then upload it back into Marketo."

Chaining Data Cleaning Steps into a Single Agent Pipeline That Writes Directly to Marketo via API

A practitioner demonstrated a LangChain-based agent pipeline that sequences all four data cleaning operations — country code standardization, phone normalization, job title translation, and job leveling — and concludes by calling the Marketo API to upload the cleaned list directly, eliminating the manual download-upload cycle between steps. Each operation runs as a discrete agent, preserving the modularity that makes individual steps easier to tune and debug, while the pipeline architecture handles the handoffs automatically.

The demonstrated approach requires development resources to implement: LangChain is an open-source framework, and constructing the agent definitions, sequencing logic, and Marketo API integration is a technical build, not a configuration task. This limits near-term applicability for teams without engineering support. However, the conceptual value is high: the demo establishes what fully automated list cleaning looks like end-to-end, and the modular agent design means individual steps can be added, removed, or reordered without rebuilding the pipeline.

For marketing operations teams with access to development resources — or evaluating whether to build internal tooling — this pattern represents the target state for recurring list-cleaning workflows. The manual version using sequential custom GPT uploads is viable as an interim approach; the agent pipeline removes the last remaining manual steps.

"What was a series of custom GPTs can now actually be chained together into a complex workflow, all the way to that very last step which is uploading into Marketo."

LLM-Derived Persona Fit Scores Could Replace Static MQL Thresholds — But Implementation Detail Is Still Nascent

A presenter outlined two extensions of the job level classification pattern: LLM-based job role classification mapped to internal persona definitions, and a composite persona fit score that combines job level, job role, and firmographic data to produce a qualitative ICP signal. The job role classification follows the same prompt-based pattern as job leveling — provide a title, receive a functional category (IT, procurement, engineering, etc.) — with the added capability to handle non-English titles inline. The output creates new segmentation dimensions in Marketo that go beyond what keyword-based smart lists can practically maintain.

The persona fit score concept is the more ambitious idea: rather than binary MQL thresholds based on behavioral scoring alone, an LLM evaluates a lead against a company-specific persona definition using job level, job role, and firmographic inputs and returns a fit signal (strong fit, weak fit). This signal could then layer into lead routing logic or MQL promotion criteria alongside traditional behavioral scores. The framing positions it as a way to move from tactical list management to strategic pipeline contribution.

The session stayed at the conceptual level for these bonus ideas — no prompts, example outputs, or implementation steps were shown. Practitioners interested in the persona fit score pattern will need to develop their own prompt structure and validate output quality before integrating it into routing or scoring logic.

"You can have the LLM take a particular lead contact and based on just the job title and some firmographic information about the company come back with a persona — like this is a strong fit for a persona or it's a weak fit — and from those scores you can then layer on whatever your lead routing rules are."

Summarised from Adobe Marketo Engage User Groups · 37:11. All credit belongs to the original creators. Streamed.News summarises publicly available video content.