Original source: Adobe Marketo Engage User Groups

This article is an editorial summary and interpretation of that content. The ideas belong to the original authors; the selection and writing are by Marketo Ops Radar.

This video from Adobe Marketo Engage User Groups covered a lot of ground. 4 segments stood out as worth your time. Everything below links directly to the timestamp in the original video.

If your team is still choosing a deduplication approach by gut feel, this maturity ladder gives you a structured framework to match method to scale — and flags the activity-history gotcha that catches bulk-merge users off guard.

A Four-Tier Deduplication Maturity Ladder for Choosing the Right Merge Approach

A recurring challenge in Marketo operations is matching deduplication method to database scale — and a framework shared in this session structures that decision as a four-tier maturity ladder: manual merging in Marketo UI for small, sensitive duplicate sets; bulk Excel-based merging for volumes in the thousands; iPaaS-driven API automation for recurring or architecturally complex duplicate patterns; and Adobe's paid auto-merge professional service for large organizations without bandwidth to manage deduplication continuously. Each tier carries distinct tradeoffs around activity history preservation, control, and operational overhead.

A critical and frequently missed gotcha surfaces at tier two: bulk Excel merging does not preserve activity history for losing records. Practitioners who optimize for speed at this tier may inadvertently discard behavioral data that matters downstream. A separate caution applies to tier three — automating merge logic via API requires airtight winning-record determination logic before any programmatic merge runs at scale. Without that, the automation compounds errors rather than resolving them.

An equally important framing from the session: deduplication is not a one-time remediation event. If large-scale merge jobs are running monthly or quarterly, that signals a systemic upstream process issue rather than a volume problem. Sustainable deduplication practice looks more like routine maintenance — small, frequent interventions that prevent accumulation — than periodic emergency cleanups.

"if you do that programmatically, you don't have that. So basically, you have to have really waterproof logic on why you plug them together in that way. Um otherwise you're left with something that you didn't wish for in the beginning."

A Decision Matrix for When to Extend Marketo's Data Model — and When to Leave It Alone

A clean mental model presented in this session reframes the custom object versus custom activity decision as nouns versus actions: custom objects represent things that exist (enrollments, purchases, assets), while custom activities represent time-series events that happened (video views, badge scans, store visits). This framing cuts through architecture ambiguity and gives Marketo practitioners a fast first-pass filter before reaching for either extension mechanism.

The more operationally useful contribution is a three-part decision matrix for when to extend the data model at all. One-off data should not be persisted in Marketo in any form. One-to-one relationships belong on person fields. Complex, recurring, multi-value history — purchase records, product ownership, enrollment history — is the appropriate domain for custom objects. The session also flags a non-obvious debugging pattern: if a custom object record isn't appearing on a person, the cause is almost always a broken linkage chain rather than a data problem — visualizing whether a continuous line exists from person to custom object in the data model resolves most of these cases.

The broader argument is an AI-readiness one: a cluttered data model with low-relevance custom object records degrades the signal quality available for future intelligence layers. Keeping the model lean by refusing to persist data that won't see regular segmentation use is framed not as housekeeping but as forward architectural investment.

"if there is no line that draws from uh the person that you try to find in your smart list to the custom object custom object record it will probably not show up. So that's where data model design is important."

A 'Data in Transit' Pattern Using Key-Value Pairs and Velocity Scripting to Avoid Bloating the Data Model

A pattern discussed in this session addresses a common tension in Marketo architecture: how to send rich, complex data through a campaign send without persisting that data permanently in the data model. The approach uses text area fields as temporary containers for structured key-value data — including JSON or compact delimited formats — which Velocity scripting then parses at send time to populate email tokens. Marketo editors interact only with standard tokens and never need to understand the underlying structure, keeping the user-facing experience simple while the complexity is abstracted into reusable scripts.

A car configurator example grounds the pattern concretely: a prospect configures a vehicle with dozens of attributes — color, model, engine type, horsepower — and the system needs to send a highly personalized follow-up email. Persisting every configuration as structured records would generate thousands of custom object entries per day for data used only once. The transit pattern allows that rich personalization to flow through a single send and then be nulled, while only the strategically valuable data — whether a purchase was made, which model was bought — gets persisted into a custom object for ongoing segmentation.

Two operational risks accompany this pattern. First, person-level flex fields are shared across processes, creating overwrite exposure if multiple workflows touch the same field simultaneously. Migrating the transient value into a program member custom field as soon as it arrives on the person significantly reduces that risk. Second, the shorter the window between data arrival and send execution, the lower the cross-process collision risk. The 30-second rule introduced alongside this pattern provides a complementary usability heuristic: if an average Marketo user cannot construct a smart list filter for a given object structure within 30 seconds, the data model is too complex for day-to-day operational use.

"if a normal marketer can't build his smart list filter for this sad object structure in 30 seconds, it's too complicated."

Design Deletion Logic Before Creation Logic: The Orphaned Custom Object Problem

A key operational hazard surfaced in this session is one most practitioners encounter only after it becomes expensive: deleting a person record in Marketo does not delete linked custom object records. Those orphaned records remain in the system, continue to count against custom object limits, and are invisible in the UI but fully visible via the API. For teams managing large custom object volumes, this creates a silent accumulation problem that compounds over time and inflates counts in ways that are difficult to audit retroactively.

The recommended practice inverts the conventional design sequence: think through deletion logic before finalizing creation logic. When should a custom object record cease to exist? What event or condition should trigger its removal? Building that answer into the architecture at design time is significantly easier than retrofitting it after records have accumulated. The session frames hygiene not as a maintenance afterthought but as a feature — a deliberate design choice that preserves data model integrity and keeps AI context relevant.

This connects to a broader data model philosophy: Marketo is not a relational database in the sense that most users interact with it, and designing for the constraints of that user-facing layer — rather than for theoretical data model elegance — is the practitioner's core architectural responsibility. Keeping only data that serves active segmentation needs, and building deletion logic to match, is how instances remain usable as they scale.

"deleting the person does not delete the custom object. It would leave you with an orphan um that is not connected to a person. So managing your custom object count actively based on what makes sense when they shall be deleted because you don't need them anymore is a uh thing that you should think about at the time where you are starting to think about creating that custom object structures not when it's too late uh because ultimately you will not see them in the UI but the API will tell you they are there and they will count."

Also mentioned in this video

- AI data quality matters using the 'garbage in, garbage out' principle, covering… (5:47)

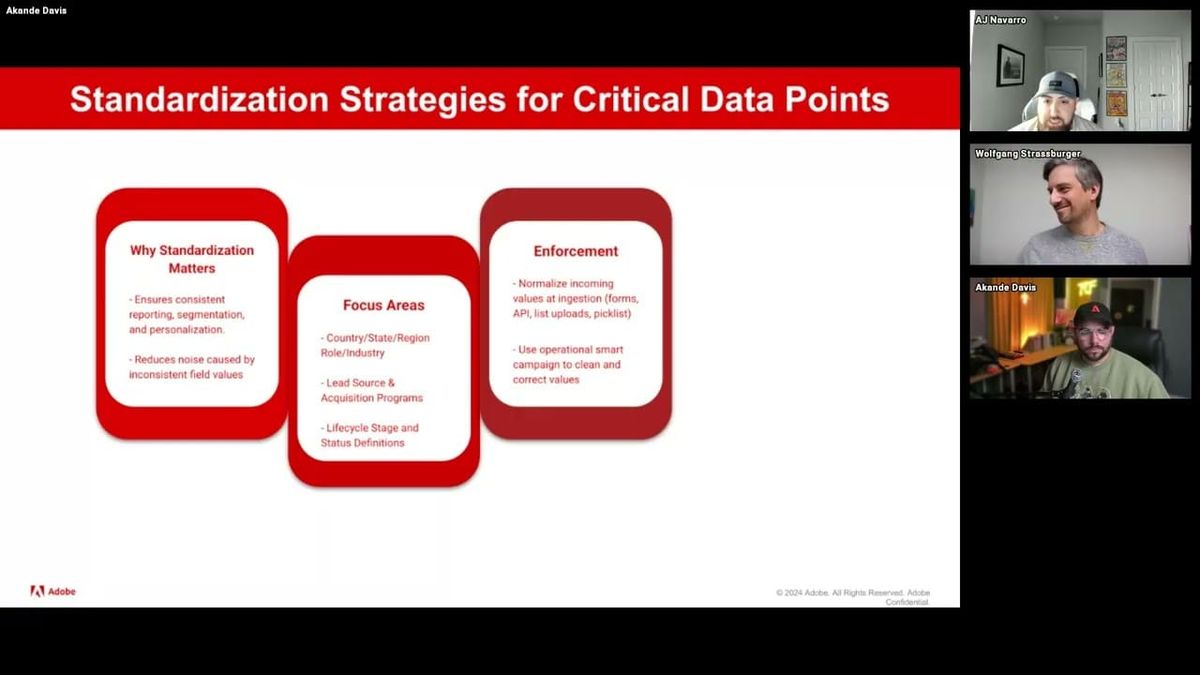

- Foundational database architecture best practices including standardized field… (14:17)

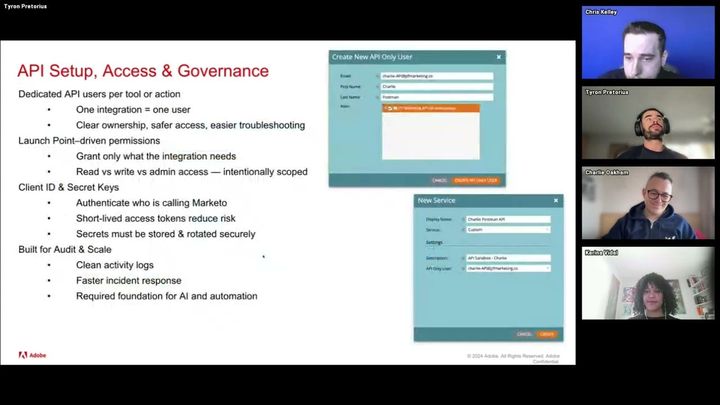

- AJ dives deeper into data governance, explaining how it prevents schema sprawl,… (22:58)

- The panel conducts a Q&A session addressing how to handle duplicate or related… (1:01:03)

Summarised from Adobe Marketo Engage User Groups · 1:11:45. All credit belongs to the original creators. Streamed.News summarises publicly available video content.