Discover the Power of ABM and Demandbase for Revenue Growth — Key Takeaways

If your intent model assumes LinkedIn research activity is visible to your ABM platform, it isn't — and that gap may be quietly distorting your account prioritization. The pattern discussed here also points toward a more complete churn-risk signal your team likely isn't using yet.

Adobe Marketo Engage User Groups | 2025-01-21 | 1:25:39

This session from Adobe Marketo Engage User Groups covered a lot of ground. Five segments stood out as worth your time. Everything below links directly to the timestamp in the original video.

Ingesting Product-Usage Data into an ABM Platform to Score Churn Risk and Cross-Sell Intent

Topic: integrations | Speaker: Matthew Cheeseman

A recurring gap in ABM implementations is the inability to incorporate post-sale behavioral signals — product usage, feature adoption, login frequency — into account scoring. A session discussion surfaced a practical pattern: where product telemetry lives outside the CRM or MAP (in an ERP, data warehouse, or CDP), it can be pulled into an ABM platform via an import API, allowing those usage signals to inform account-level scoring alongside intent and engagement data. The combined picture — an account showing low product adoption while simultaneously researching a competitor — becomes a high-confidence churn signal that neither system could surface alone.
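As a rough illustration of the combined signal described above, the sketch below flags an account as high churn risk only when low adoption and competitor research coincide. The field names, the 0.3 adoption cutoff, and the three risk labels are assumptions made for this example; they are not taken from the session or from any vendor's scoring model.

```python
from dataclasses import dataclass


@dataclass
class AccountSignals:
    account_id: str
    adoption_rate: float      # hypothetical metric: share of licensed features in use, 0.0 to 1.0
    competitor_intent: bool   # hypothetical flag: intent data shows competitor research


def churn_risk(signals: AccountSignals, low_adoption_cutoff: float = 0.3) -> str:
    """Combine a usage signal and an intent signal into a coarse risk label."""
    low_adoption = signals.adoption_rate < low_adoption_cutoff
    if low_adoption and signals.competitor_intent:
        return "high"    # both signals agree
    if low_adoption or signals.competitor_intent:
        return "watch"   # only one signal fired
    return "low"


accounts = [
    AccountSignals("acme", 0.15, True),
    AccountSignals("globex", 0.15, False),
    AccountSignals("initech", 0.80, False),
]
risk_by_account = {a.account_id: churn_risk(a) for a in accounts}
```

In practice the adoption figure would come from whatever system holds product telemetry, and the cutoff would need tuning against real churn history.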

On the intent data side, a question about LinkedIn research activity produced a clarifying answer that saves practitioners from a common false assumption: behavioral data generated within LinkedIn (searches, profile views, content engagement) is proprietary to LinkedIn and is not passed to third-party intent networks. Only data that a customer can explicitly export from LinkedIn and import separately would be available for account scoring. This is a meaningful constraint for teams building intent models that assume broad social research visibility.

For teams managing multi-product portfolios, the discussion also confirmed that buying groups can be constructed per product line by combining keyword sets mapped to each solution with URL-based segmentation of the vendor's own site. This allows product-specific intent signals to feed separate account journeys — a scalable pattern for organizations where a single ABM platform must serve multiple GTM motions simultaneously.
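One way to picture the per-product segmentation is a small rule table that pairs URL prefixes on the vendor's own site with intent keywords for each solution. Everything in the sketch below (product names, paths, keywords) is made up for illustration, and a real ABM platform would configure this in its own UI rather than in code.

```python
from urllib.parse import urlparse

# Hypothetical rule table: one entry per product line.
PRODUCT_LINES = {
    "analytics": {
        "url_prefixes": ("/products/analytics",),
        "keywords": {"dashboard software", "bi reporting"},
    },
    "payments": {
        "url_prefixes": ("/products/payments",),
        "keywords": {"payment gateway", "invoicing api"},
    },
}


def product_lines_for(page_urls, intent_keywords):
    """Return the product lines an account shows interest in."""
    paths = [urlparse(url).path for url in page_urls]
    keywords = {k.lower() for k in intent_keywords}
    matched = set()
    for name, rules in PRODUCT_LINES.items():
        url_hit = any(path.startswith(rules["url_prefixes"]) for path in paths)
        keyword_hit = bool(keywords & rules["keywords"])
        if url_hit or keyword_hit:
            matched.add(name)
    return matched
```

An account that visits the analytics pricing page while researching "payment gateway" elsewhere would land in both product journeys under these rules.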

"some of the data on LinkedIn is tricky to get hold of as you'd imagine LinkedIn you know want to try and maximize their revenue since Microsoft purchased them for 20 plus billion they want to maximize that data and leverage that whatever data you can get out of LinkedIn from a company level and a company perspective you can bring that into Demandbase so the short answer is yes the long answer is it depends on what you can get from LinkedIn that you can then bring in and import into demand base"

— Matthew Cheeseman

Key takeaways:

- LinkedIn behavioral data (searches, engagement within the platform) is proprietary and not available to third-party intent networks — do not assume social research intent is captured.

- Product usage data from ERPs, data warehouses, or CDPs can be ingested into an ABM platform via import API, enabling churn-risk and cross-sell scoring models that combine usage signals with third-party intent.

- Buying groups for multi-product portfolios can be segmented per product line by mapping keyword sets and site URL patterns to each solution, enabling product-specific account journeys at scale.

- Combining low product adoption signals with competitor intent research in account scoring creates a high-confidence churn indicator that neither the MAP nor the CRM can surface independently.

Why this matters: If your intent model assumes LinkedIn research activity is visible to your ABM platform, it isn't — and that gap may be quietly distorting your account prioritization. The pattern discussed here also points toward a more complete churn-risk signal your team likely isn't using yet.

🎬 Watch this segment: 1:18:21

Reframing ABM Measurement: Why MQL Metrics Fail the Account-Based Model — and What to Track Instead

Topic: use-case | Speaker: Inga Keizare

A fundamental pattern discussed in this session is the measurement mismatch that occurs when teams adopt ABM strategy but retain lead-generation KPIs. One practitioner described the shift in concrete terms: replacing MQL volume and cost-per-MQL with opportunity count, ACV, win rate, deal velocity, and net revenue retention as the primary success metrics. The distinction between MQL (a single person engaging) and MQA (multiple contacts from the same account engaging) was framed as the structural inflection point — the MQA threshold is where ABM mechanics begin to differentiate from traditional lead-gen, and where marketing activity starts to reflect actual buying-group behavior rather than individual interest.
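The MQL versus MQA distinction can be sketched as a simple count of distinct engaged contacts per account. The threshold of two contacts is an assumption for this example; the session described MQA only as "multiple contacts from the same account engaging".

```python
from collections import defaultdict


def qualified_accounts(engagements, min_contacts=2):
    """Return accounts with at least `min_contacts` distinct engaged people.

    `engagements` is an iterable of (account_id, contact_email) pairs.
    The default threshold of 2 is a hypothetical starting point.
    """
    contacts = defaultdict(set)
    for account_id, email in engagements:
        contacts[account_id].add(email.lower())  # de-duplicate the same person
    return {acct for acct, people in contacts.items() if len(people) >= min_contacts}


engagements = [
    ("acme", "ana@acme.example"),
    ("acme", "ben@acme.example"),
    ("globex", "cy@globex.example"),
    ("globex", "CY@globex.example"),  # same person, different casing
]
mqas = qualified_accounts(engagements)
```

Here one person engaging twice does not qualify an account, which is the structural difference from counting MQLs.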

A practical failure story added real texture: a personalized campaign that generated zero form fills was initially judged unsuccessful, but two to five months after the campaign, accounts from the target list appeared in opportunity and MQL stages. The lesson is that ABM campaigns require an extended attribution window and that immediate post-campaign reporting is structurally misleading. A parallel observation about attribution hygiene reinforced it: SDR-sourced opportunities were being miscategorized because manual process steps were not consistently followed, causing significant underreporting of ABM-influenced pipeline until a survey surfaced the discrepancy.
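To see how much the reporting window changes the verdict, the sketch below counts target-account opportunities created between campaign start and a configurable number of days after campaign end. The record shape, dates, and window lengths are invented for illustration.

```python
from datetime import date, timedelta


def influenced_opportunities(opportunities, target_accounts,
                             campaign_start, campaign_end, window_days):
    """Opportunities on target accounts created within the attribution window."""
    cutoff = campaign_end + timedelta(days=window_days)
    return [
        opp for opp in opportunities
        if opp["account"] in target_accounts
        and campaign_start <= opp["created"] <= cutoff
    ]


# Hypothetical data: one target-account opportunity opened 70 days after the campaign.
opportunities = [
    {"account": "acme", "created": date(2024, 5, 10)},
    {"account": "other", "created": date(2024, 3, 3)},
]
targets = {"acme"}
start, end = date(2024, 2, 1), date(2024, 3, 1)

one_week = influenced_opportunities(opportunities, targets, start, end, window_days=7)
five_months = influenced_opportunities(opportunities, targets, start, end, window_days=150)
```

With a one-week window the campaign shows nothing; with a five-month window the same campaign shows one influenced opportunity.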

Personalization at the company level drove a measured 63% conversion rate improvement in specific campaign instances, and an estimated 468% ROI was cited for the full ABM program in 2023. Both figures carry methodological caveats — the ROI estimate in particular was framed as a renewal-time calculation rather than a rigorously controlled measurement — but they illustrate the order-of-magnitude case that experienced practitioners are making internally to justify continued ABM investment.

"We run a campaign with very small traffic and a high bounce rate, report it as unsuccessful — then come back five months later to find opportunities and customers closing from that targeted account list"

— Inga Keizare

Key takeaways:

- Replace MQL volume and cost-per-MQL with opportunity count, ACV, win rate, deal velocity, and net revenue retention as the primary ABM success metrics.

- Define an MQA threshold (multiple contacts from the same account engaging) as the structural handoff point that distinguishes ABM from lead-gen — and build your funnel stages around it.

- Extend your attribution window well beyond campaign end dates; ABM influence on pipeline may not appear for two to five months after a campaign closes.

- Audit SDR opportunity attribution regularly — manual source-tagging processes degrade over time and will systematically underreport ABM-influenced pipeline.

- Company-level personalization of landing pages and ads can produce significant conversion lift, but measure it against a defined baseline to isolate the personalization effect.

Why this matters: If your team is still reporting ABM campaign performance the week after a campaign ends, you're almost certainly mischaracterizing what's working. The attribution patterns described here suggest your pipeline reporting window — and your source-tagging process — may need a structural rethink.

🎬 Watch this segment: 16:13

How One ABM Implementation Restructured the Marketing-Sales Relationship — and Resized Its Target Account Tiers

Topic: use-case | Speaker: Brittany Wolfe

A pattern that emerged in this session is that sustained ABM adoption tends to force organizational change rather than simply adding tooling. One marketing operations team described a multi-step evolution: starting as an enterprise marketing team, shifting to a revenue marketing model tied to opportunity metrics rather than MQL volume, and ultimately consolidating into a unified GTM team where marketing and sales share the same goals and reporting structure. The framing was explicit that ABM was a contributing factor but not the sole driver — intentional meeting cadences, shared goal-setting, and ongoing communication with sales were equally necessary.

On the Marketo integration question, the practical detail was that engagement-minute scoring — derived from email opens and clicks tracked in the MAP — flows into the ABM platform to contribute to account-level engagement scores. The point made was that without a MAP feeding behavioral signals at the person level, account-level scoring would be limited to anonymous web traffic, which is materially less useful for prioritization. The integration is less about changing how Marketo is used and more about ensuring Marketo's existing activity data is properly surfaced at the account level in the ABM tool.
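A minimal sketch of the roll-up described above: person-level email activity is converted to minutes and summed per account. The minute weights and the lead-to-account mapping are hypothetical; the actual weighting is configured in the ABM platform and was not specified in the session.

```python
# Hypothetical weights: minutes credited per activity type.
MINUTES_PER_ACTIVITY = {"email_open": 1, "email_click": 3}


def account_engagement_minutes(activities, lead_to_account):
    """Sum engagement minutes per account from person-level activities.

    `activities` is an iterable of (lead_id, activity_type) pairs;
    `lead_to_account` maps each known lead to its account.
    """
    totals = {}
    for lead_id, activity_type in activities:
        account = lead_to_account.get(lead_id)
        if account is None:
            continue  # lead not matched to any account, so it adds nothing
        minutes = MINUTES_PER_ACTIVITY.get(activity_type, 0)
        totals[account] = totals.get(account, 0) + minutes
    return totals


activities = [(1, "email_open"), (2, "email_click"), (3, "email_click"), (9, "email_open")]
lead_to_account = {1: "acme", 2: "acme", 3: "globex"}
minutes = account_engagement_minutes(activities, lead_to_account)
```

The unmatched lead (id 9) is the failure mode worth checking in a real integration: activity that never reaches an account record cannot raise its score.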

On tier sizing, one team's experience offered a useful calibration benchmark: tier-one ABM (high-touch, one-to-one) was tested and ultimately abandoned because deal sizes did not justify the investment required to coordinate personalized outreach across sales, marketing, and customer success. Tier-two campaigns operated with lists of roughly 100–150 accounts at a time. What might be considered tier-three was handled as a series of one-to-many programs — industry-specific campaigns or integration-announcement campaigns targeting companies using a specific technology — rather than a standing account list.

"Without Marketo I don't think Demandbase would work as well. Maybe it doesn't have to be Marketo — it could be something else like HubSpot — but without that insight we're getting from Marketo as far as lead scoring goes, and having all those attributes we're feeding into Demandbase to score the accounts, I don't know how it would work otherwise, other than just getting website traffic and website visitors."

— Brittany Wolfe

Key takeaways:

- ABM adoption is more likely to succeed when marketing is measured on opportunity and revenue metrics shared with sales, not on MQL volume — the metric change often precedes or drives the organizational change.

- Engagement-minute data from email activity in your MAP is a meaningful input to account-level scoring in an ABM platform; ensure your integration is configured to pass this signal correctly.

- Tier-one ABM requires deal sizes that justify the coordination overhead across sales, marketing, and customer success — validate this assumption before investing in one-to-one programs.

- Tier-two campaign lists of roughly 100–150 accounts at a time worked for one team; treat that as a calibration point for teams without dedicated ABM headcount rather than a proven ceiling.

- One-to-many ABM programs work well when defined by a specific technographic or product-integration trigger rather than a standing tiered list.

Why this matters: If your ABM tier structure was designed before you knew your actual deal economics, the sizing may be wrong — and one team's experience of abandoning tier-one entirely is a useful data point. The Marketo integration detail here is also worth checking: engagement-minute data flowing correctly into account scoring is a dependency that's easy to misconfigure.

🎬 Watch this segment: 32:05

How IP-Based Account De-Anonymization and Product-Interest Heat Maps Can Trigger Marketo Campaign Enrollment

Topic: integrations | Speaker: Matthew Cheeseman

A live product demonstration surfaced several mechanics worth understanding for Marketo practitioners evaluating ABM tooling. The core architecture is IP and device-ID based account identification: anonymous website visitors and publisher article readers are mapped back to company identity through a maintained account identification database, allowing anonymous engagement to be attributed at the account level rather than the individual level. This resolves a structural blind spot in MAP-only setups, where engagement data exists only for cookied, known contacts — typically those who have already submitted a form. The practical implication is that buying-group research behavior happening before form fill becomes visible as an account-level signal.
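The IP half of this lookup can be sketched with Python's standard `ipaddress` module. The network ranges below are reserved documentation ranges and the company names are placeholders; a vendor's real identification database is far larger and also uses device IDs, which this example leaves out.

```python
import ipaddress

# Placeholder table: network range -> company. Ranges are documentation-only blocks.
ACCOUNT_NETWORKS = [
    (ipaddress.ip_network("203.0.113.0/24"), "Acme Corp"),
    (ipaddress.ip_network("198.51.100.0/25"), "Globex"),
]


def identify_account(ip):
    """Map a visitor IP to a company name, or None if no range matches."""
    addr = ipaddress.ip_address(ip)
    for network, account in ACCOUNT_NETWORKS:
        if addr in network:
            return account
    return None
```

A visit from `203.0.113.42` resolves to "Acme Corp" without any form fill, while an address outside every known range stays anonymous.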

The lead-to-account matching layer aggregates all Marketo activities — email clicks, opens, form submissions, content engagement — under a single account record, combining them with anonymous web visit data and third-party intent keyword signals. The resulting product-interest heat map assigns engagement intensity per account per product line, enabling automated enrollment into the relevant Marketo nurture stream or ad campaign without manual segmentation. The automation trigger pattern is: account reaches a defined engagement threshold for a specific product signal → account and associated contacts are automatically added to the corresponding Marketo campaign, Salesforce task, or LinkedIn ad audience.
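The threshold trigger can be pictured as a scan over a heat map of account-by-product scores. The scores, thresholds, and campaign names here are illustrative assumptions; in the demo this logic lives inside the ABM platform rather than in custom code.

```python
def enrollment_actions(heat_map, thresholds, campaigns):
    """Return (account, campaign) pairs for every score that meets its threshold.

    `heat_map` maps account -> {product: engagement score}.
    Products without a threshold or a campaign are skipped.
    """
    actions = []
    for account, scores in heat_map.items():
        for product, score in scores.items():
            threshold = thresholds.get(product)
            campaign = campaigns.get(product)
            if threshold is None or campaign is None:
                continue
            if score >= threshold:
                actions.append((account, campaign))
    return actions


# Hypothetical inputs.
heat_map = {
    "acme": {"analytics": 82, "payments": 10},
    "globex": {"analytics": 35, "payments": 67},
}
thresholds = {"analytics": 60, "payments": 60}
campaigns = {"analytics": "Analytics Nurture", "payments": "Payments Nurture"}

actions = enrollment_actions(heat_map, thresholds, campaigns)
```

Each resulting pair would then be handed to whatever performs the enrollment, such as a Marketo campaign, a Salesforce task, or an ad audience sync.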

The journey-stage framework demonstrated here maps directly onto existing Marketo lifecycle thinking but operates at the account level, with stage definitions driven by combinations of intent signal recency, anonymous site activity, and known-contact engagement. The velocity reporting — tracking how many accounts advance between stages over a given period — provides a pipeline-leading indicator that is particularly useful in long sales cycles where closed-won revenue attribution is too lagged to guide in-period decisions. Journey stages are configurable, can be renamed, and can be mapped to CRM opportunity stages to preserve continuity with existing reporting.
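Stage velocity can be approximated by comparing two snapshots of account stages and counting forward moves. The stage names and snapshot format below are assumptions for illustration, since the session noted that stages are configurable and can be renamed.

```python
from collections import Counter

# Hypothetical ordered journey stages.
STAGES = ["Aware", "Engaged", "MQA", "Opportunity"]


def stage_advances(start_snapshot, end_snapshot):
    """Count accounts that moved to a later stage between two snapshots."""
    rank = {stage: i for i, stage in enumerate(STAGES)}
    moves = Counter()
    for account, start_stage in start_snapshot.items():
        end_stage = end_snapshot.get(account, start_stage)
        if rank[end_stage] > rank[start_stage]:
            moves[(start_stage, end_stage)] += 1
    return moves


quarter_start = {"acme": "Aware", "globex": "Engaged", "initech": "MQA", "umbrella": "Aware"}
quarter_end = {"acme": "Engaged", "globex": "MQA", "initech": "MQA", "umbrella": "Engaged"}
velocity = stage_advances(quarter_start, quarter_end)
```

Accounts that stay put or move backward are ignored here; a fuller report would probably track regressions as well.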

"What we are doing here is we are leveraging the IP address and other IDs that we utilize to build what we call our account identification database. And it sounds a little creepy, but that is our ability to ultimately track users across the web but actually map that back to the company that they work for."

— Matthew Cheeseman

Key takeaways:

- IP and device-ID based account identification surfaces anonymous buying-group research activity that is invisible in MAP-only setups — this is the core data gap ABM tooling addresses.

- Lead-to-account matching aggregates all MAP activity under a single account record, enabling account-level engagement scoring that combines known-contact behavior with anonymous signals.

- Product-interest heat maps can automate Marketo campaign enrollment based on account-level engagement intensity per solution, eliminating the need for manual segmentation across large target account lists.

- Account-based journey stages with configurable definitions provide a pipeline-leading metric (stage velocity) that is more actionable than closed-won revenue in long sales cycles.

- Website personalization triggered by account identification can be delivered at scale without engineering involvement, extending the impact of Marketo form-based personalization to anonymous account visitors.

Why this matters: The gap between what your Marketo instance knows about an account and what that account is actually doing on your website and across the web is larger than most practitioners realize. The mechanics demonstrated here show specifically how that gap can be closed — and how to wire the resulting signals back into Marketo campaign enrollment automatically.

🎬 Watch this segment: 53:45

Get the Process Before the Platform: One ABM Team's Honest Retrospective on Sequencing an ABM Program

Topic: use-case | Speaker: Brittany Wolfe

A practitioner who built an ABM program from scratch shared a retrospective that led with a clear sequencing mistake: procuring the ABM platform before validating sales team commitment and process readiness. The honest framing, "I really fought to get the tool first," is a useful counterweight to the vendor-led implementation narrative. The downstream problem was predictable: sales alignment issues that should have been caught during process design instead surfaced after the contract was signed.

The recommended alternative is a low-cost pilot: agree on 10 accounts with sales, run targeted and personalized activities across those accounts using existing tools (ads, email, events, gifting), and use the results to assess whether sales will actually follow through on their stated commitments before investing in dedicated ABM tooling. The caveat raised in the following segment — that 10 accounts is a statistically thin test pool with binary success/failure outcomes — is worth holding alongside this recommendation, particularly in longer sales cycles where results may not be visible within the pilot window.
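To put a number on how thin a 10-account pool is, the sketch below computes a Wilson score interval for a binary success rate. This is a standard statistical technique chosen for this illustration, not something presented in the session, and the "3 of 10" outcome is a made-up example.

```python
import math


def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives about 95% confidence)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return center - half_width, center + half_width


# Hypothetical pilot: 3 of 10 accounts progressed to opportunity.
low, high = wilson_interval(3, 10)
```

For 3 successes out of 10, the interval runs from roughly 11% to 60%, so the pilot alone cannot distinguish a weak program from a strong one. It remains useful for the qualitative question the speaker raised: whether sales actually follows through.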

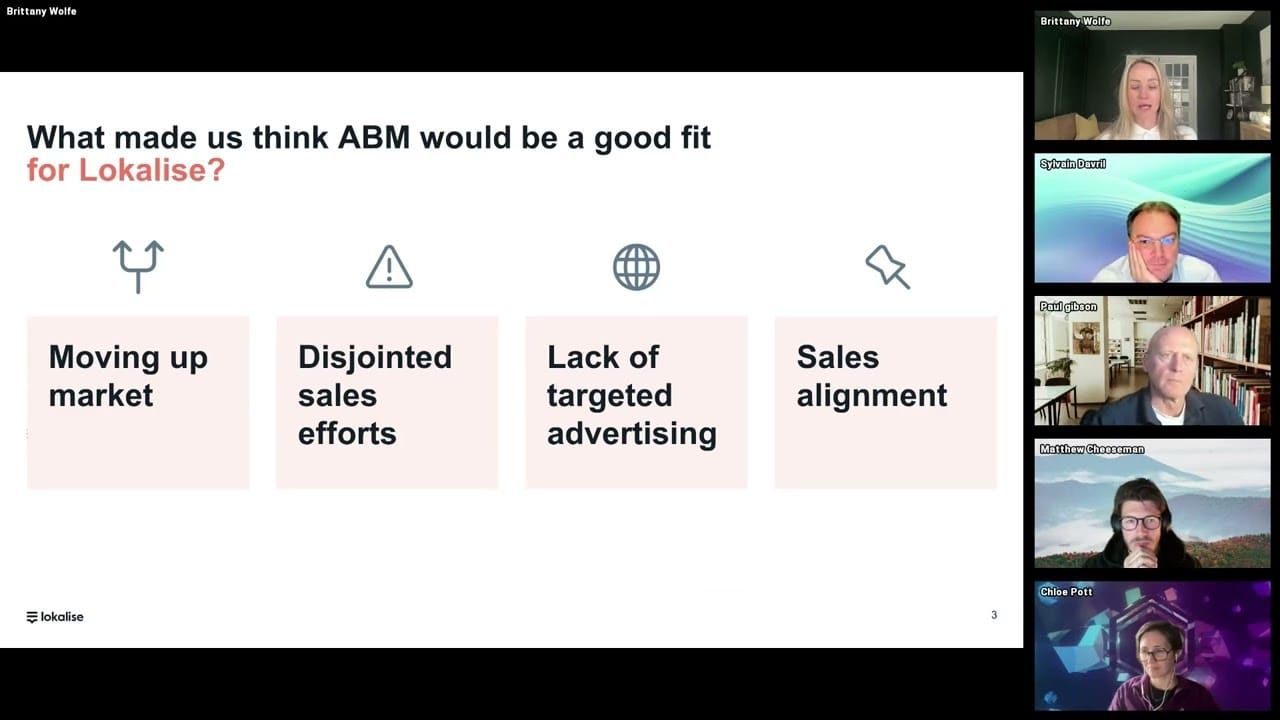

The business drivers behind the ABM decision were consistent with common patterns: a move upmarket, a large and uncoordinated outbound team with no intent data to prioritize accounts, and marketing and sales running entirely separate account lists with no coordination. The program was built multi-channel from the outset — ads, outbound, events, email, gifting — with the explicit framing that channel mix should be calibrated to what is operationally feasible for the organization rather than following a prescribed ABM playbook.

"I would really recommend doing a test if you're going to try ABM — just pick 10 accounts that the sales team agrees they would want to go after, run some very targeted and personalised ads towards them, do some engagement activities like webinars or gifting campaigns, to really see: if we go all in and focus on these 10 accounts, are they going to move through the funnel, are they going to close faster, and is the sales team actually going to do what they say they're going to do?"

— Brittany Wolfe

Key takeaways:

- Validate sales team commitment and account-level process alignment before purchasing an ABM platform — misalignment discovered post-contract is more costly to correct.

- Run a manual pilot on a small, agreed-upon account list using existing tools before investing in dedicated ABM tooling to surface process gaps and test sales follow-through.

- Recognize that a 10-account pilot has statistical limitations, especially in long sales cycles — build in enough time window to see meaningful signal before drawing conclusions.

- ABM channel mix should be calibrated to what is operationally feasible for the team, not to an idealized multi-channel playbook — start with the channels you can actually execute.

- Disjointed outbound efforts with no shared account prioritization data are a strong leading indicator that an ABM motion will address a real structural problem, not just a tooling gap.

Why this matters: The tool-before-process mistake is common enough that hearing it named directly — and with specific consequences — is worth sharing with any MOps team currently being asked to evaluate ABM platforms under leadership pressure.

🎬 Watch this segment: 5:41

Content summarized from publicly available MUG recordings. Not affiliated with Adobe. Summaries reflect my interpretation — always validate before implementing in your environment.

This is a personal project by JP Garcia. I work at Kapturall but this publication is independent and not affiliated with or endorsed by my employer. All credit belongs to the original speakers and Adobe Marketo Engage User Groups. I curate and link back to source — I never re-upload or reproduce full sessions. Full disclaimer →